Empirical Evaluation of Interactive Visualizations for Preferential Choice

An Empirical Evaluation of Interactive Visualizations for Preferential Choice by Jeanette Bautista and Giuseppe Carenini

Short Description

The authors of this paper set out to show that ValueCharts are useful for supporting preferential choice, that is, finding the best option in a set of alternatives. They also compared two types of ValueCharts: a horizontal version and a vertical version. The outcome of this extensive user study was that ValueCharts in general, and the vertical version in particular (abbreviated VC+V), seemed to be very effective in supporting decision making.

Process of decision making

According to prescriptive decision theory, the process of effective preferential choice can be divided into three phases.

Phase 1 / Model construction phase: the decision maker (abbr. DM) identifies the objectives that are important to him/her and chooses the degree of importance of each.

Phase 2 / Inspection phase: DM analyzes his/her preference model as applied to a set of alternatives.

Phase 3 / Sensitivity analysis: DM is able to answer "what if" questions, such as "if we make a slight change in one or more aspects of the model, does it affect the optimal decision?"
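A "what if" question of this kind can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the preference model is taken to be a flat weighted sum, the hotels, objectives, weights and scores are invented, and `total_values`, `best` and `what_if` are hypothetical helper names, not part of any ValueCharts implementation.

```python
def total_values(weights, scores):
    """Weighted sum of per-objective scores (each in [0, 1]) for every alternative."""
    return {
        name: sum(weights[obj] * value for obj, value in per_objective.items())
        for name, per_objective in scores.items()
    }


def best(weights, scores):
    """Name of the alternative with the highest total value."""
    totals = total_values(weights, scores)
    return max(totals, key=totals.get)


def what_if(weights, scores, objective, new_weight):
    """Set one weight, rescale the others so all weights still sum to 1,
    and return the best alternative before and after the change."""
    others = {o: w for o, w in weights.items() if o != objective}
    scale = (1.0 - new_weight) / sum(others.values())
    changed = {o: w * scale for o, w in others.items()}
    changed[objective] = new_weight
    return best(weights, scores), best(changed, scores)


# Hypothetical model and data, invented for illustration only.
weights = {"price": 0.5, "size": 0.2, "internet": 0.3}
scores = {
    "HotelA": {"price": 0.9, "size": 0.3, "internet": 0.4},
    "HotelB": {"price": 0.3, "size": 0.8, "internet": 1.0},
}

before, after = what_if(weights, scores, "internet", 0.6)
print(before, after)  # HotelA HotelB
```

With these invented numbers, doubling the weight of `internet` flips the best alternative, which is exactly the kind of outcome a sensitivity analysis is meant to reveal.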

ValueCharts+

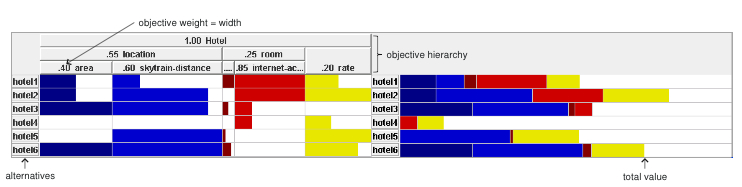

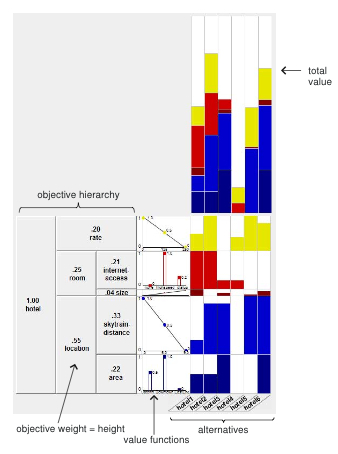

ValueCharts+ (VC+) is a set of interactive visualization techniques for preferential choice and is an improvement on ValueCharts. It supports the DM in the 3 phases described above. In an Additive Multiattribute Value Function the DM’s objectives are hierarchically organized. In VC+ this hierarchy is displayed as an exploded stacked bar. ValueCharts+ follows the information-seeking mantra by Ben Shneiderman: overview first, zoom and filter, then details on demand.
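As a rough illustration of an additive value function over a hierarchy of objectives, the following Python sketch flattens a small objective tree into leaf weights and sums the weighted component values. The assumptions are loud ones: sibling weights are taken to sum to 1, a leaf's effective weight is taken to be the product of the weights along its path, and the hierarchy, the numbers and the helper names `leaf_weights` and `total_value` are all invented for this example.

```python
def leaf_weights(node, parent_weight=1.0):
    """Flatten a hierarchy of objectives into {leaf_name: effective_weight}.

    Assumption: a leaf's effective weight is the product of the weights
    on the path from the root down to that leaf.
    """
    weight = parent_weight * node["weight"]
    children = node.get("children")
    if not children:
        return {node["name"]: weight}
    flat = {}
    for child in children:
        flat.update(leaf_weights(child, weight))
    return flat


def total_value(flat_weights, component_values):
    """Additive value: sum over leaves of weight * component value in [0, 1]."""
    return sum(w * component_values[name] for name, w in flat_weights.items())


# Hypothetical hierarchy, invented for illustration only.
hierarchy = {
    "name": "hotel", "weight": 1.0,
    "children": [
        {"name": "rate", "weight": 0.4},
        {"name": "room", "weight": 0.6, "children": [
            {"name": "size", "weight": 0.25},
            {"name": "internet-access", "weight": 0.75},
        ]},
    ],
}

flat = leaf_weights(hierarchy)
value = total_value(flat, {"rate": 0.5, "size": 1.0, "internet-access": 0.0})
print(flat)
print(round(value, 2))
```

Here `rate` keeps its weight of 0.4, while `size` and `internet-access` share the 0.6 assigned to `room`, so the flattened weights still sum to 1.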

Figures

Shown above is the horizontal version of a ValueChart+ presented by Bautista and Carenini.

Shown above is the vertical version of a ValueChart+ presented by Bautista and Carenini.

The vertical height of each row indicates the relative weight assigned to each objective (e.g., size is much less important than internet-access). Each column represents an alternative, thus each cell portrays an objective corresponding to an alternative (bottom-right quadrant). The amount of filled color relative to cell size depicts the alternative’s preference.
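The encoding described above can be approximated with a small geometry calculation. The sketch below is not the authors' rendering code: it assumes that row height is proportional to the objective's weight and that each cell is filled from the bottom in proportion to the alternative's value for that objective. The function name `cell_layout`, the pixel height and the data are hypothetical.

```python
def cell_layout(weights, values, chart_height=300.0):
    """Compute row heights and filled heights for every (objective, alternative) cell.

    Assumptions: row height is proportional to the objective's weight, and the
    filled part of a cell is row_height * value, with value in [0, 1].
    """
    total_weight = sum(weights.values())
    cells = {}
    for objective, weight in weights.items():
        row_height = chart_height * weight / total_weight
        for alternative, per_objective in values.items():
            cells[(objective, alternative)] = {
                "row_height": row_height,
                "filled_height": row_height * per_objective[objective],
            }
    return cells


# Hypothetical weights and values, invented for illustration only.
weights = {"size": 0.1, "internet-access": 0.9}
values = {"Hotel1": {"size": 1.0, "internet-access": 0.5}}

cells = cell_layout(weights, values)
stacked = sum(c["filled_height"] for (_, alt), c in cells.items() if alt == "Hotel1")
print(cells)
print(stacked)
```

Under these assumptions, stacking the filled parts of one alternative's cells gives a bar whose height is proportional to its total value, which is what the stacked-bar part of the display conveys.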

Important Citation(s)

Suitable for which data types

Suitable for different domains in which the values of the objectives in a preferential choice can be quantified.

Evaluation Part A: Controlled Study

In Part A, the authors took a quantitative approach by performing a controlled usability study to see how users performed the primitive tasks of the PVIT (Preferential Choice Visualization Integrated Task Model). Part A starts in the sensitivity analysis phase. The task was to answer the following 5 questions (based on the PVIT model):

What are the top 3 alternatives according to total value?

For a specified alternative, which objective contributes to its total value the most?

What is the domain value of objective x for alternative y?

What is the best alternative when considering only objective x?

What is the best outcome for an objective x?

Mapped to the house domain, for example, we get the following tasks:

List the 3 highest valued houses.

For HouseX, which is its strongest factor according to your preferences?

How many bathrooms are there in House1?

Which is the least expensive house?

What is the best bus-distance?
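To make the five tasks concrete, the sketch below answers each of them programmatically for a tiny house dataset. Everything in it is an assumption made for illustration: the houses, the raw domain values, the scores in [0, 1], the weights and the weighted-sum model are invented, and in the study the subjects answered these questions by reading the visualization, not by running code.

```python
# Hypothetical model and data, invented for illustration only.
weights = {"price": 0.5, "bathrooms": 0.2, "bus-distance": 0.3}
domain = {  # raw domain values
    "House1": {"price": 1600, "bathrooms": 2, "bus-distance": 1},
    "House2": {"price": 1000, "bathrooms": 1, "bus-distance": 11},
    "House3": {"price": 1400, "bathrooms": 2, "bus-distance": 7},
    "House4": {"price": 2000, "bathrooms": 3, "bus-distance": 3},
}
score = {  # value of each domain value on a 0..1 scale (1 is best)
    "House1": {"price": 0.4, "bathrooms": 0.5, "bus-distance": 1.0},
    "House2": {"price": 1.0, "bathrooms": 0.0, "bus-distance": 0.0},
    "House3": {"price": 0.6, "bathrooms": 0.5, "bus-distance": 0.4},
    "House4": {"price": 0.0, "bathrooms": 1.0, "bus-distance": 0.8},
}

totals = {
    house: sum(weights[obj] * s for obj, s in per_objective.items())
    for house, per_objective in score.items()
}

# Task 1: list the 3 highest valued houses.
top3 = sorted(totals, key=totals.get, reverse=True)[:3]

# Task 2: for House1, which objective contributes most to its total value?
strongest = max(weights, key=lambda obj: weights[obj] * score["House1"][obj])

# Task 3: how many bathrooms are there in House1?
bathrooms = domain["House1"]["bathrooms"]

# Task 4: which house is best when considering only price (the least expensive)?
cheapest = max(score, key=lambda house: score[house]["price"])

# Task 5: what is the best bus-distance, i.e. the domain value with the top score?
best_bus_house = max(score, key=lambda house: score[house]["bus-distance"])
best_bus_distance = domain[best_bus_house]["bus-distance"]

print(top3)               # ['House1', 'House3', 'House2']
print(strongest)          # bus-distance
print(bathrooms)          # 2
print(cheapest)           # House2
print(best_bus_distance)  # 1
```

Tasks 1 and 2 depend on the weights, whereas tasks 3 to 5 only read domain values or single-objective scores, which is one way to see why they are treated as separate primitive tasks.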

The subjects had to test ValueCharts+ in the sensitivity analysis and inspection phases. No questions directed at the experimenter were allowed. The test domain was a set of hotels in the Vancouver area. 20 subjects were tested: 10 used VC+H and the remaining 10 used VC+V. Each subject performed each task, writing down the answer for those tasks that asked a question about the data. This procedure was repeated 5 times. The authors examined the results for an indication of whether one version of VC+ was a better fit than the other during the decision-making process.

Finally, in the second step of the analysis, the authors determined, for each task, with which interface the subjects performed better. VC+V performed better on all five inspection tasks and on three of the four sensitivity analysis tasks.

Evaluation Part B: User Study

In Part B, the authors followed a more qualitative approach by observing subjects using the tool in a real decision-making context. In this second part of the study, they attempted to measure the users’ insight into the decision problem. Once the subjects had completed Part B, they filled out a questionnaire regarding their experience with VC+ in the decision-making process. In the exploratory study, the amount of insight each subject gains from using VC+ for a particular decision-making scenario is measured. Insights are characterized by the following:

Fact: The actual finding about the data (e.g. “Samsung [cell phones] are the smallest”)

Value: How valuable is each insight? The authors determined and coded the value of each insight on a scale from 1 to 3. Simple observations of domain value and top ranking (e.g. “cheapest place is in East Van”) are fairly trivial, while more global observations regarding relationships and comparisons (e.g. “more expensive phones have all the features”) are more valuable.

Category: Insights were grouped into several categories:

– Simple fact: an alternative rank or identification of domain value e.g. “This phone is fairly light”, “This phone is only [ranked] fourth for battery”

– Sensitivity: how a change affects the results e.g. “This house again!”, “Now this phone is third”

– Realization of personal preferences: users often stated that they made a realization about their preferences e.g. “it makes sense, because I really like hiking and nature”, “brand should be more important [to me]”
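The three characteristics above (fact, value, category) map naturally onto a small record type. The following Python sketch shows one way such coded insights could be tallied per subject or per interface. The quoted facts come from the examples above, but the values assigned to them here are invented, and `Insight` and `summarize` are hypothetical names rather than the authors' coding tool.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Insight:
    fact: str       # the actual finding about the data
    value: int      # coded from 1 (fairly trivial) to 3 (most valuable)
    category: str   # "simple fact", "sensitivity" or "personal preference"


def summarize(insights):
    """Count insights, sum their coded values, and tally them by category."""
    return {
        "count": len(insights),
        "total_value": sum(i.value for i in insights),
        "by_category": Counter(i.category for i in insights),
    }


# The value codes below are invented for illustration only.
session = [
    Insight("This phone is fairly light", 1, "simple fact"),
    Insight("Now this phone is third", 2, "sensitivity"),
    Insight("brand should be more important [to me]", 3, "personal preference"),
]

summary = summarize(session)
print(summary["count"], summary["total_value"])
print(summary["by_category"])
```

Keeping the count and the summed value separate mirrors the way the results report both the number of insights and their value.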

The subjects could choose among the following datasets: house rental, cell phone and tourism.

More insights were counted for the vertical interface, which also fared better when the value factor was considered. There were also more value changes related to sensitivity analysis in the vertical version, apparently because that interface invited such changes more readily.

In a post-study questionnaire, all of the subjects reported being generally satisfied with their decision. Subjects felt that VC+ was a good tool for learning about their preferences in the selected domain. This was closely tied to insights as well: the authors found a significant positive correlation between the rating of this question and insight. Subjects who had little prior exposure to decision analysis reported that they learned how to analyze a decision model. All subjects thought that VC+ is useful, intuitive, easy to use and quick to learn. However, the lack of statistical significance for the difference in insights (count and value) indicates the need for a larger experiment.

References

Jeanette Bautista and Giuseppe Carenini. An empirical evaluation of interactive visualizations for preferential choice.
